Topic Modeling Martha Ballard’s Diary

In A Midwife’s Tale, Laurel Ulrich describes the challenge of analyzing Martha Ballard’s exhaustive diary, which records daily entries over the course of 27 years: “The problem is not that the diary is trivial but that it introduces more stories than can be easily recovered and absorbed” (25). This fundamental challenge is the one I’ve tried to tackle by analyzing Ballard’s diary using text mining. Such an approach has advantages and disadvantages: computers are very good at counting the instances of the word “God,” for instance, but less effective at recognizing that “the Author of all my Mercies” should be counted as well. The question remains: how does a reader (computer or human) recognize and conceptualize the recurrent themes that run through nearly 10,000 entries?

One answer lies in topic modeling, a method of computational linguistics that attempts to find words that frequently appear together within a text and then group them into clusters. I was introduced to topic modeling through a separate collaborative project that I’ve been working on under the direction of Matthew Jockers (who also recently topic-modeled posts from Day in the Life of Digital Humanities 2010). Matt, ever-generous and enthusiastic, helped me to install MALLET (MAchine Learning for LanguagE Toolkit), developed by Andrew McCallum at UMass as “a Java-based package for statistical natural language processing, document classification, clustering, topic modeling, information extraction, and other machine learning applications to text.” MALLET allows you to feed in a series of text files, which the program then processes to generate a user-specified number of word clusters that it treats as related topics. I don’t pretend to have a firm grasp on the inner statistical and computational plumbing of how MALLET produces these topics, but in the case of Martha Ballard’s diary, it worked. Beautifully.

With some tinkering, MALLET generated a list of thirty topics of roughly twenty words each, and I then gave each topic a descriptive label. Below is a quick sample of what the program “thinks” are some of the topics in the diary:

- MIDWIFERY: birth deld safe morn receivd calld left cleverly pm labour fine reward arivd infant expected recd shee born patient

- CHURCH: meeting attended afternoon reverend worship foren mr famely performd vers attend public supper st service lecture discoarst administred supt

- DEATH: day yesterday informd morn years death ye hear expired expird weak dead las past heard days drowned departed evinn

- GARDENING: gardin sett worked clear beens corn warm planted matters cucumbers gatherd potatoes plants ou sowd door squash wed seeds

- SHOPPING: lb made brot bot tea butter sugar carried oz chees pork candles wheat store pr beef spirit churnd flower

- ILLNESS: unwell mr sick gave dr rainy easier care head neighbor feet relief made throat poorly takeing medisin ts stomach

When I first ran the topic modeler, I was floored. A human being would intuitively lump words like attended, reverend, and worship together based on their meanings. But MALLET is completely unconcerned with the meaning of a word (which is fortunate, given the difficulty of teaching a computer that, in this text, discoarst actually means discoursed). Instead, the program is only concerned with how the words are used in the text, and specifically with which words tend to appear alongside one another.
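
To make the idea of "words used together" concrete, here is a minimal sketch that simply counts how often pairs of words share an entry. It is not MALLET's algorithm (MALLET fits a statistical topic model), and the four short entries are invented stand-ins built from words in the topic lists above, so treat it as an illustration of the co-occurrence intuition only.

```python
from collections import Counter
from itertools import combinations

# Hypothetical mini-corpus: each string stands in for one diary entry.
# The words are borrowed from the topic lists in this post; the entries are invented.
entries = [
    "attended meeting reverend worship afternoon",
    "meeting reverend worship lecture",
    "gardin planted beens corn",
    "planted corn squash seeds gardin",
]


def cooccurrence_counts(docs):
    """Count how many entries contain each unordered pair of distinct words."""
    counts = Counter()
    for doc in docs:
        # Sort the unique words so each pair is always stored in the same order.
        words = sorted(set(doc.split()))
        for pair in combinations(words, 2):
            counts[pair] += 1
    return counts


pair_counts = cooccurrence_counts(entries)

# Pairs that share many entries are candidates for the same cluster.
for pair, n in pair_counts.most_common(5):
    print(pair, n)
```

Even in this toy corpus, church words only ever pair with other church words and garden words with other garden words, without the code knowing anything about what the words mean.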

Besides a remarkably impressive ability to recognize cohesive topics, MALLET also allows us to track those topics across the text. With help from Matt and using the statistical package R, I generated a matrix with each row as a separate diary entry, each column as a separate topic, and each cell as a “score” signaling the relative presence of that topic. For instance, on November 28, 1795, Ballard attended the delivery of Timothy Page’s wife. Consequently, MALLET’s score for the MIDWIFERY topic jumps up significantly on that day. In essence, topic modeling accurately recognized, in a mere 55 words (many abbreviated into a jumbled shorthand), the dominant theme of that entry:

"Clear and pleasant. I am at mr Pages, had another fitt of ye Cramp, not So Severe as that ye night past. mrss Pages illness Came on at Evng and Shee was Deliverd at 11h of a Son which waid 12 lb. I tarried all night She was Some faint a little while after Delivery."

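The entry-by-topic matrix can be pictured with a small sketch. MALLET's real scores come from its fitted model; the scoring below (the share of an entry's words that appear in a topic's word list) is a deliberately crude stand-in, and both the entries and the shortened word lists are assumptions made for illustration.

```python
# Shortened topic word lists, excerpted from the topics shown in this post.
topics = {
    "MIDWIFERY": {"birth", "deld", "safe", "labour", "infant", "born"},
    "GARDENING": {"gardin", "planted", "corn", "squash", "seeds", "sowd"},
}

# Invented entries (not quotations from the diary), keyed by a hypothetical id.
entries = {
    "entry_a": "calld to mr pages his wife deld a son safe",
    "entry_b": "clear i planted corn and squash in the gardin today",
}


def topic_scores(text, topic_words):
    """Return, for each topic, the fraction of the entry's words found in that topic."""
    words = text.lower().split()
    if not words:
        return {name: 0.0 for name in topic_words}
    return {
        name: sum(word in vocab for word in words) / len(words)
        for name, vocab in topic_words.items()
    }


# Rows are entries, columns are topics, cells are scores.
matrix = {entry_id: topic_scores(text, topics) for entry_id, text in entries.items()}

for entry_id, row in matrix.items():
    print(entry_id, row)
```

The shape is the point here: one row per entry, one column per topic, and a number in each cell that rises when the entry leans on that topic's vocabulary.
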
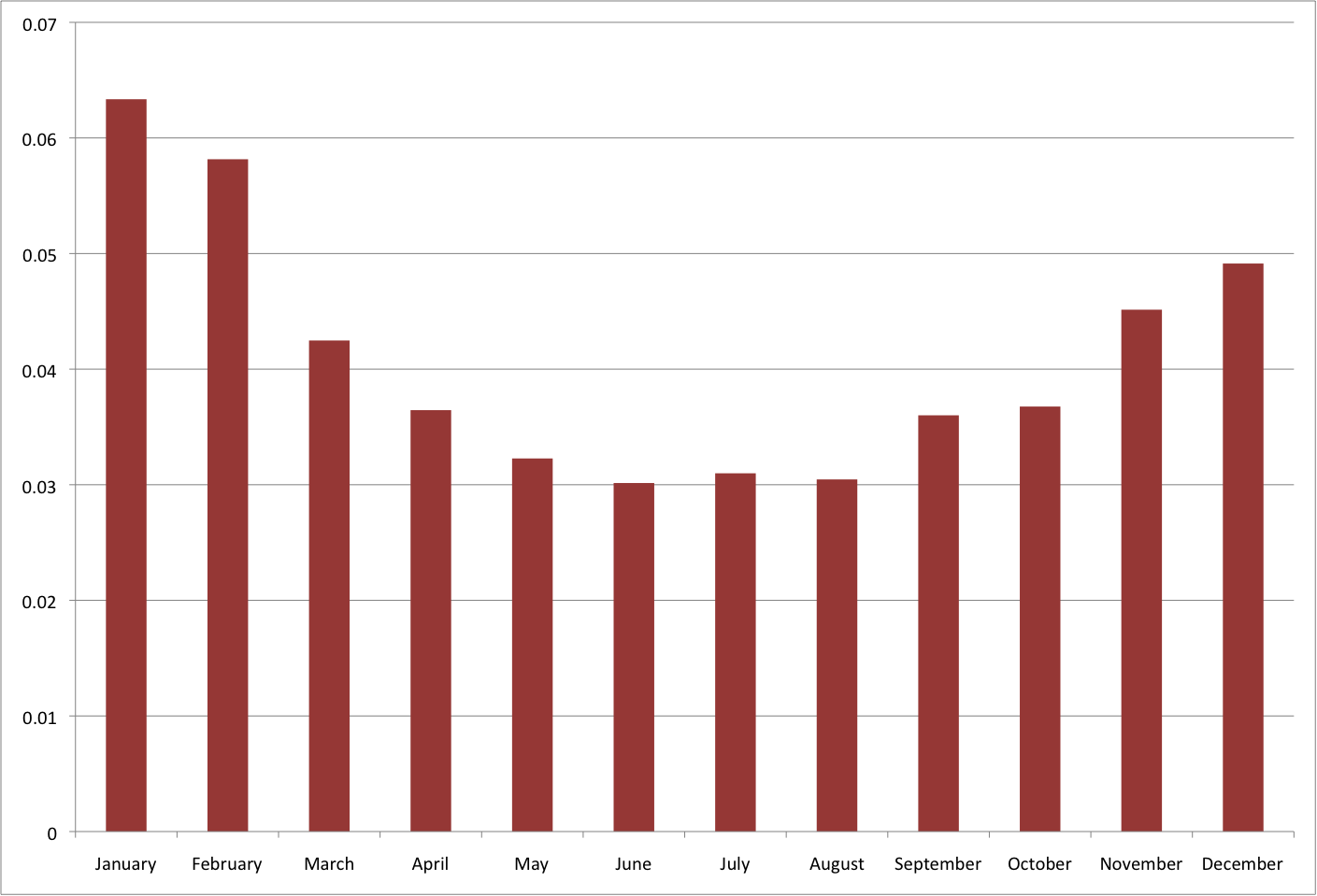

The power of topic modeling really emerges when we examine thematic trends across the entire diary. As a simple barometer of its effectiveness, I used one of the generated topics that I labeled COLD WEATHER, which included words such as cold, windy, chilly, snowy, and air. When its entry scores are aggregated into months of the year, it shows exactly what one would expect over the course of a typical year:
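
Aggregating entry scores into months of the year can be sketched as follows. The dates and scores are made up, and taking the mean per calendar month is an assumption (the post does not say whether scores were averaged or summed).

```python
from collections import defaultdict
from datetime import date

# Hypothetical (entry date, COLD WEATHER score) pairs; values are invented.
scored_entries = [
    (date(1795, 1, 5), 0.30),
    (date(1795, 1, 20), 0.20),
    (date(1795, 7, 4), 0.00),
    (date(1796, 1, 9), 0.40),
    (date(1796, 7, 15), 0.02),
]


def mean_score_by_month(pairs):
    """Average scores by calendar month (1-12), pooling all years together."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for day, score in pairs:
        totals[day.month] += score
        counts[day.month] += 1
    return {month: totals[month] / counts[month] for month in sorted(totals)}


monthly = mean_score_by_month(scored_entries)

for month, avg in monthly.items():
    print(month, round(avg, 3))
```

Pooling every January together, every February together, and so on is what turns 27 years of entries into a single "typical year" curve.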

As a barometer, this made me a lot more confident in MALLET’s accuracy. From there, I looked at other topics. Two topics seemed to deal largely with HOUSEWORK:

- house work clear knit wk home wool removd washing kinds pickt helping banking chips taxes picking cleaning pikt pails

- home clear washt baked cloaths helped washing wash girls pies cleand things room bak kitchen ironed apple seller scolt

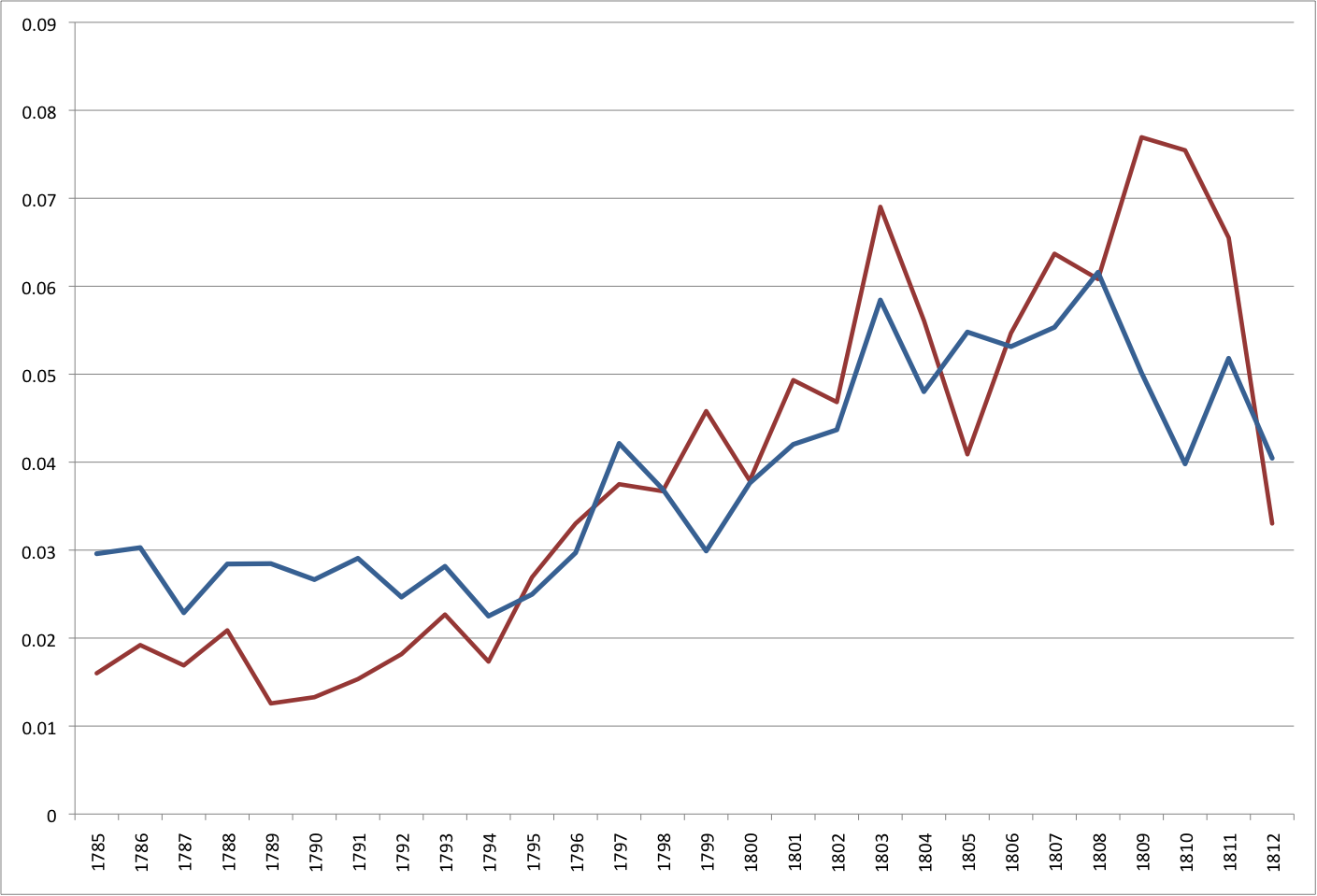

When charted over the course of the diary, these two topics trace how frequently Ballard mentions these kinds of daily tasks:

Both topics moved in tandem, with a high correlation coefficient of 0.83, and both steadily increased as she grew older (excepting a curious divergence in the last several years of the diary). This is somewhat counter-intuitive, as one would think the household responsibilities for an aging grandmother with a large family would decrease over time. Yet this pattern bolsters the argument made by Ulrich in A Midwife’s Tale, in which she points out that the first half of the diary was “written when her family’s productive power was at its height.” (285) As her children married and moved into different households, and her own husband experienced mounting legal and financial troubles, her daily burdens around the house increased. Topic modeling allows us to quantify and visualize this pattern, a pattern not immediately visible to a human reader.
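
For readers unfamiliar with the statistic, here is a minimal sketch of how a correlation coefficient between two topic series could be computed, assuming the standard Pearson coefficient (the post does not specify which one was used). The yearly values are invented and will not reproduce the 0.83 figure.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length numeric series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have the same length, with at least two values")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return covariance / sqrt(spread_x * spread_y)


# Hypothetical yearly averages for the two HOUSEWORK topics (invented numbers).
housework_a = [0.10, 0.12, 0.15, 0.14, 0.18, 0.21]
housework_b = [0.08, 0.11, 0.12, 0.13, 0.17, 0.16]

print(round(pearson(housework_a, housework_b), 2))
```

A value near 1 means the two series rise and fall together, a value near 0 means no linear relationship, and a value near -1 means one rises as the other falls.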

Even more significantly, topic modeling allows us a glimpse not only into Martha’s tangible world (such as weather or housework topics), but also into her abstract world. One topic in particular leaped out at me:

feel husband unwel warm feeble felt god great fatagud fatagued thro life time year dear rose famely bu good

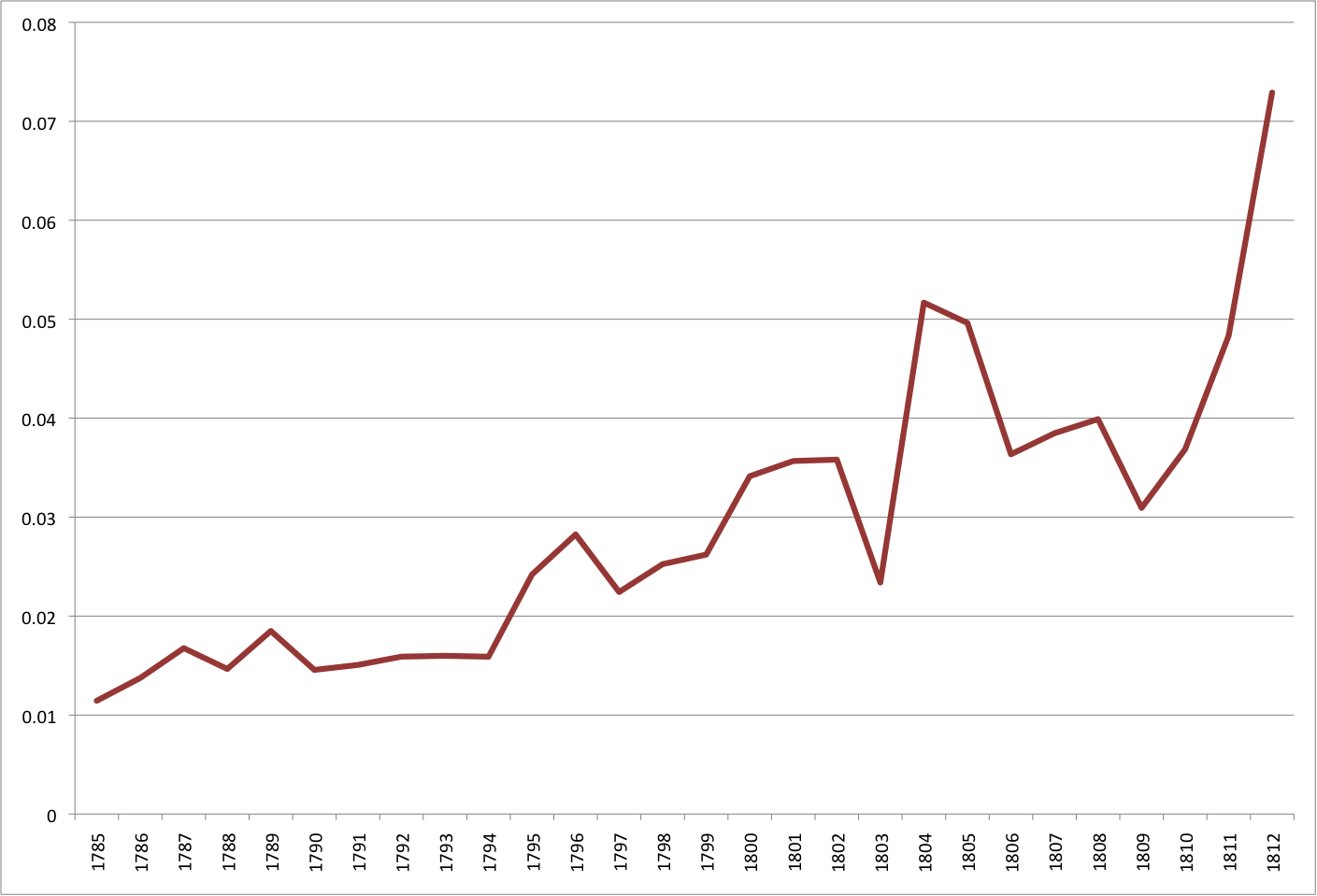

The most descriptive label I could assign this topic would be EMOTION - a tricky and elusive concept for humans to analyze, much less computers. Yet MALLET did a largely impressive job in identifying when Ballard was discussing her emotional state. How does this topic appear over the course of the diary?

Like the housework topic, there is a broad increase over time. In this chart, the sharp changes are quite revealing. In particular, we see Martha more than double her use of EMOTION words between 1803 and 1804. What exactly was going on in her life at this time? Quite a bit. Her husband was imprisoned for debt and her son was indicted by a grand jury for fraud, causing a cascade effect on Martha's own life - all of which Ulrich describes as "the family tumults of 1804-1805." (285) Little wonder that Ballard increasingly invoked "God" or felt "fatagued" during this period.

I am absolutely intrigued by the potential for topic modeling in historical source material. In many ways, it seems that Martha Ballard's diary is ideally suited for this kind of analysis. Short, content-driven entries that usually touch upon a limited number of topics appear to produce remarkably cohesive and accurate topics. In some cases (especially in the case of the EMOTION topic), MALLET did a better job of grouping words than a human reader. But the biggest advantage lies in its ability to extract unseen patterns in word usage. For instance, I would not have thought that the words "informd" or "hear" would cluster so strongly into the DEATH topic. But they do, and they do so more strongly within that topic than the words dead, expired, or departed. This speaks volumes about the spread of information - in Martha Ballard's diary, death is largely written about in the context of news being disseminated through face-to-face interactions. When used in conjunction with traditional close reading of the diary and other forms of text mining (for instance, charting Ballard's social network), topic modeling offers a new and valuable way of interpreting the source material.

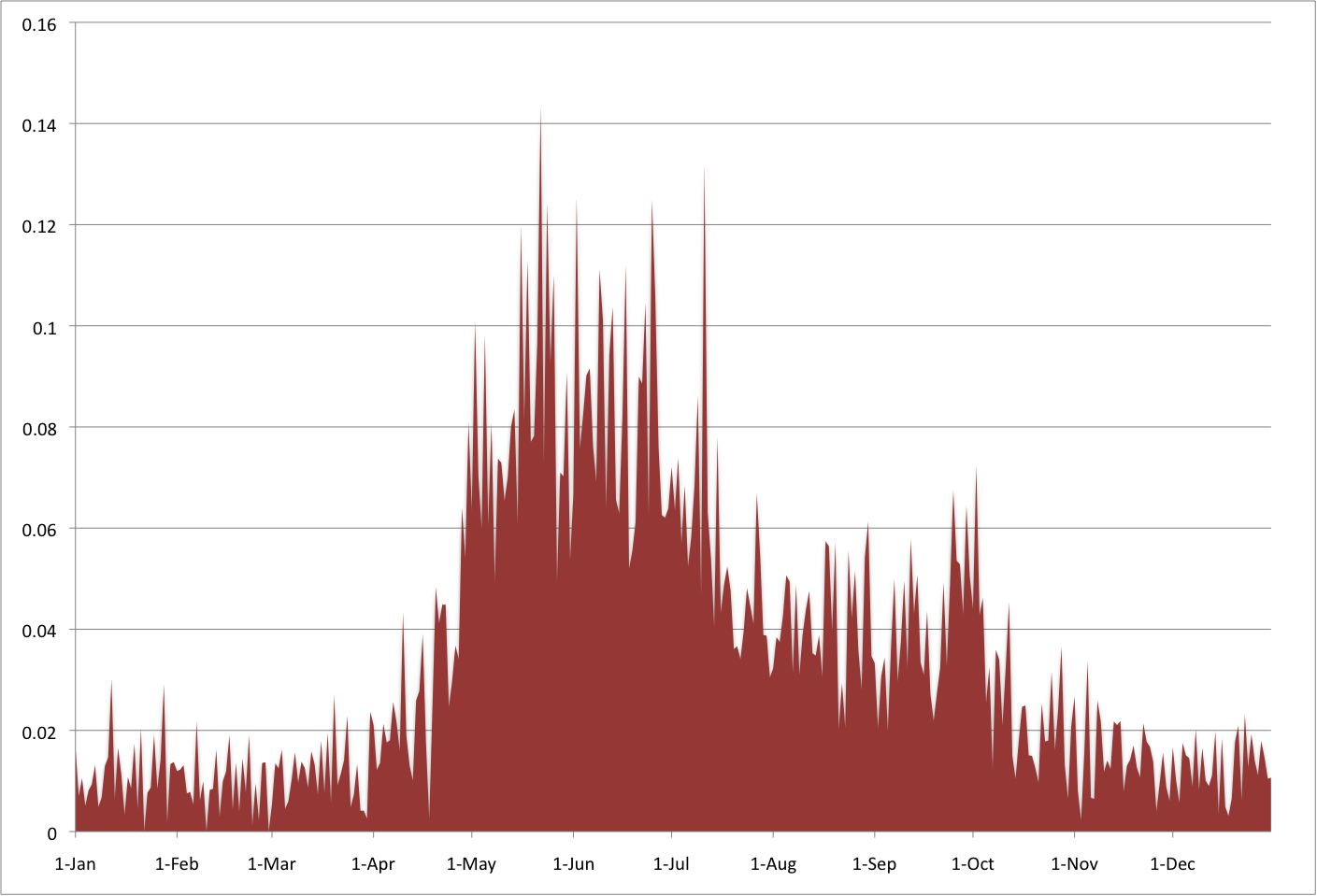

I'll end my post with a topic near and dear to Martha Ballard's heart: her garden. To a greater degree than any other topic, GARDENING words boast incredible thematic cohesion (gardin sett worked clear beens corn warm planted matters cucumbers gatherd potatoes plants ou sowd door squash wed seeds) and over the course of the diary's average year they also beautifully depict the fingerprint of Maine's seasonal cycles:

Note: this post is part of an ongoing series detailing my work on text mining Martha Ballard’s diary.